TinyFish Web Agents Outperform OpenAI and Anthropic on Hard Web Tasks, Company Releases All 300 Benchmark Runs Publicly

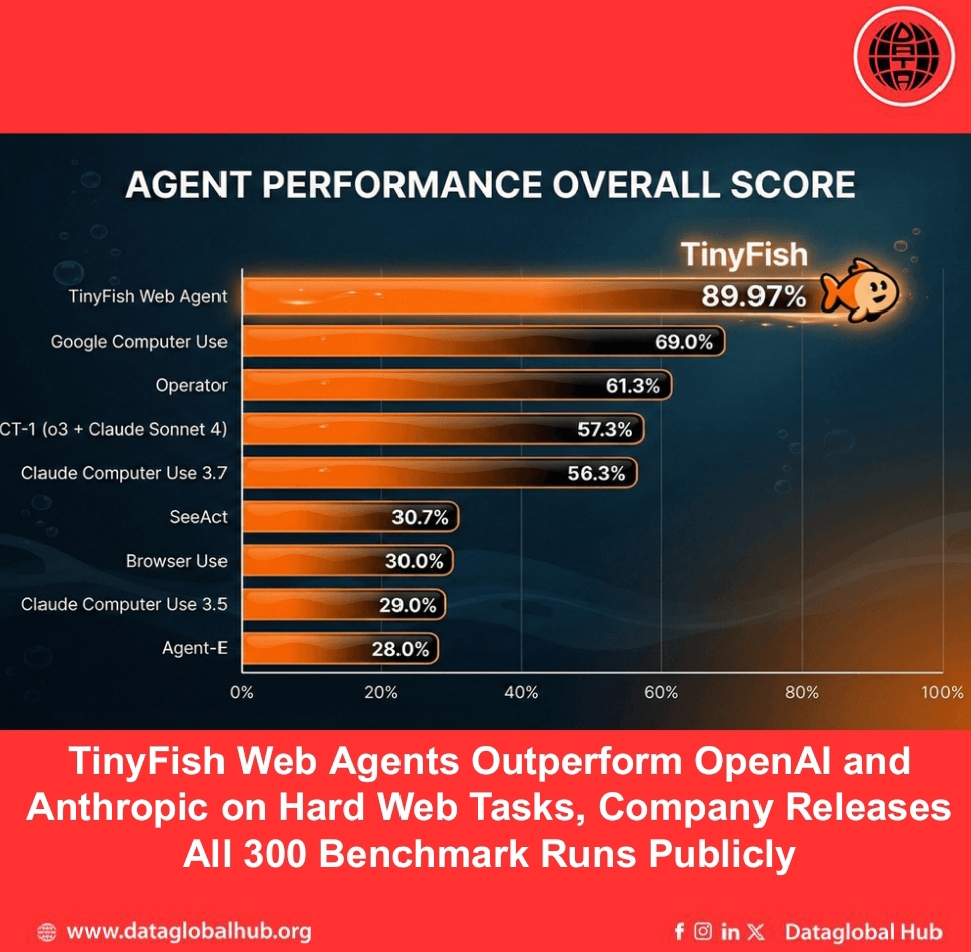

TinyFish has published benchmark results showing its web agents achieving 90 percent accuracy on the Mind2Web evaluation, a 29-point lead over OpenAI's Operator and a 34-point lead over Anthropic's Claude Computer Use. The company released all 300 individual task runs publicly, including every failure, with full execution traces viewable online.

The Mind2Web benchmark tests agents on 300 tasks across 136 live websites, with three difficulty levels. On hard tasks—defined as multi-step workflows requiring ten or more interactions—TinyFish scored 81.9 percent. Operator scored 43.2 percent on the same tasks. Claude Computer Use dropped to 32.4 percent, and Browser Use fell to 8.1 percent.

Why the Gap Widens on Hard Tasks

The company attributes the performance difference to how it handles compounding errors. Hard tasks require many sequential steps; a system with 95 percent per-step accuracy succeeds 86 percent of the time on three-step tasks but only 60 percent on ten-step tasks. At 90 percent per-step accuracy, the ten-step success rate drops to 35 percent.
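The figures above follow from raising the per-step accuracy to the number of steps, assuming each step succeeds independently (an idealization, since real steps are often correlated). A minimal sketch of that arithmetic, where `task_success_rate` is an illustrative name rather than anything from TinyFish's code:

```python
def task_success_rate(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return per_step_accuracy ** steps


# Reproduce the per-step accuracies and task lengths cited in the article.
for accuracy in (0.95, 0.90):
    for steps in (3, 10):
        rate = task_success_rate(accuracy, steps)
        print(f"{accuracy:.0%} per step, {steps} steps: {rate:.0%}")
```

Under this model, small per-step gains matter most on long tasks: moving from 90 to 95 percent per step nearly doubles the ten-step success rate.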

TinyFish's 16-point drop from easy to hard tasks indicates its system handles compounding errors well. By contrast, the 58-point drop for Claude Computer Use suggests its easy-task scores were not predictive of real-world performance.

Architecture: Reasoning Layer Plus Execution Layer

Standard web agents take a screenshot of each page and query a frontier model for every click, a process that takes one to five seconds per step and produces inconsistent results. TinyFish splits the problem. A reasoning layer built on large models handles the 20 to 30 percent of steps that require actual judgment: understanding page intent, interpreting unusual layouts, choosing between valid paths. An execution layer built on small, task-specific models handles mechanical actions such as clicking date pickers, selecting dropdowns, submitting forms, and paginating, in milliseconds rather than seconds.
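The split between the two layers can be pictured as a simple router. The sketch below is an assumption-laden illustration, not TinyFish's implementation: the action names, the `Step` type, and both layer functions are hypothetical stand-ins, and the article does not say how steps are actually classified.

```python
from dataclasses import dataclass

# Hypothetical action categories based on the examples the article lists;
# how TinyFish actually classifies steps is not described.
MECHANICAL_ACTIONS = {"click_date_picker", "select_dropdown", "submit_form", "paginate"}


@dataclass
class Step:
    action: str
    target: str


def reasoning_layer(step: Step) -> str:
    # Stand-in for a slow call to a large model (seconds per step).
    return f"large-model decision for {step.action} on {step.target}"


def execution_layer(step: Step) -> str:
    # Stand-in for a fast, task-specific small model (milliseconds per step).
    return f"small-model execution of {step.action} on {step.target}"


def route(step: Step) -> str:
    """Send mechanical actions to the execution layer, everything else to reasoning."""
    if step.action in MECHANICAL_ACTIONS:
        return execution_layer(step)
    return reasoning_layer(step)


steps = [
    Step("interpret_layout", "search results page"),
    Step("select_dropdown", "sort order"),
    Step("paginate", "next page"),
]
for step in steps:
    print(route(step))
```

The design point is that only steps outside the mechanical set pay the cost of a large-model call; everything else takes the fast path.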

A separate infrastructure layer manages proxy rotation, browser fingerprinting, geographic routing, and bot detection evasion. All of these were active during the benchmark runs. In one documented case, a Kaggle task that initially failed because of anti-bot blocking was automatically rerouted through a different proxy on a subsequent run and passed Cloudflare's checks without human intervention.
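The retry behavior in the Kaggle example resembles a basic fallback loop. The following is a simulated sketch only: `run_with_proxy_rotation`, `simulated_task`, and the proxy labels are hypothetical, no network traffic is involved, and the article does not describe how TinyFish selects or switches proxies.

```python
from typing import Callable, Optional, Sequence


def run_with_proxy_rotation(
    run_task: Callable[[str], bool],
    proxies: Sequence[str],
) -> Optional[str]:
    """Try each proxy in order; return the first one on which the task passes."""
    for proxy in proxies:
        if run_task(proxy):
            return proxy
    return None  # every proxy was blocked


# Simulated task: blocked on the first proxy, passes on any other.
def simulated_task(proxy: str) -> bool:
    return proxy != "proxy-a"


winner = run_with_proxy_rotation(simulated_task, ["proxy-a", "proxy-b", "proxy-c"])
print(winner)  # proxy-b
```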

The 40 Failures, Documented

The 40 failed tasks break down into three categories. Twelve failures were caused by anti-bot blocks; apartments.com alone accounted for eight of these. Four failures stemmed from unsupported UI interactions: slider widgets on Chase retirement calculators, drag-and-drop on chess.com, and locating specific images in Imgur's meme creator. Twenty-four failures were edge cases: filter application issues on Thumbtack, missed navigation steps on Eventbrite, and various search and filter problems across sites including Samsung, Best Buy, and Healthline. Every failure has a public execution trace.

Open-Source Cookbook Launches

Alongside the benchmark release, TinyFish published the TinyFish Cookbook on GitHub, a collection of sample applications and automations built with the platform. Each folder contains a standalone project with documentation, and contributions are open under an MIT license. Recipes include an anime watch hub for finding free manga sites, a sports betting odds comparison tool, a competitive pricing intelligence dashboard, a loan comparison tool across banks, a logistics intelligence platform for port congestion tracking, a manga availability finder across reading platforms, an open-box deal aggregator spanning eight retailers, an academic research co-pilot for ArXiv and PubMed, a scholarship discovery system pulling from official websites, and a travel stay search tool for conventions and events.

The Platform

TinyFish offers an API that treats websites as programmable surfaces. Users send a natural language goal and URLs, and the API returns structured JSON, handling navigation, forms, filters, dynamic content, and multi-step flows across sites in parallel. Customers cited include Google, DoorDash, and ClassPass.
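As a rough illustration of the "goal plus URLs in, structured JSON out" pattern, the sketch below builds a request body. The field names `goal` and `urls`, the `build_request` helper, and the example URLs are assumptions for illustration; they are not taken from TinyFish's API documentation, and no request is sent.

```python
import json


def build_request(goal: str, urls: list[str]) -> str:
    """Serialize a goal and target URLs into a JSON request body.

    The field names "goal" and "urls" are assumptions for illustration,
    not TinyFish's documented schema.
    """
    if not goal.strip():
        raise ValueError("goal must be a non-empty string")
    if not urls:
        raise ValueError("at least one URL is required")
    return json.dumps({"goal": goal, "urls": urls})


body = build_request(
    "Find the lowest price for a 27-inch monitor",
    ["https://example.com/store-a", "https://example.com/store-b"],
)
print(body)
```

A real integration would post a body like this to the provider's endpoint with authentication and parse the JSON that comes back; consult the official API reference for the actual schema.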

The company also announced the TinyFish Accelerator, a nine-week virtual program backed by a $2 million seed pool from Mango Capital, offering funding, free credits, engineering support, and business mentorship for founders building on what it calls the "Agentic Web." Applications opened February 17 with rolling admissions.

All benchmark data, execution traces, and code are available on GitHub and on the company's website: https://www.tinyfish.ai/blog/mind2web
